Hey Adopter,

This issue gives you the one move separating the 6% of teams pulling real profit from AI from the 94% stuck in pilot mode. You get the numbers, the cases, and a five-stage playbook used by global firms.

The 21 percent rule nobody mentions

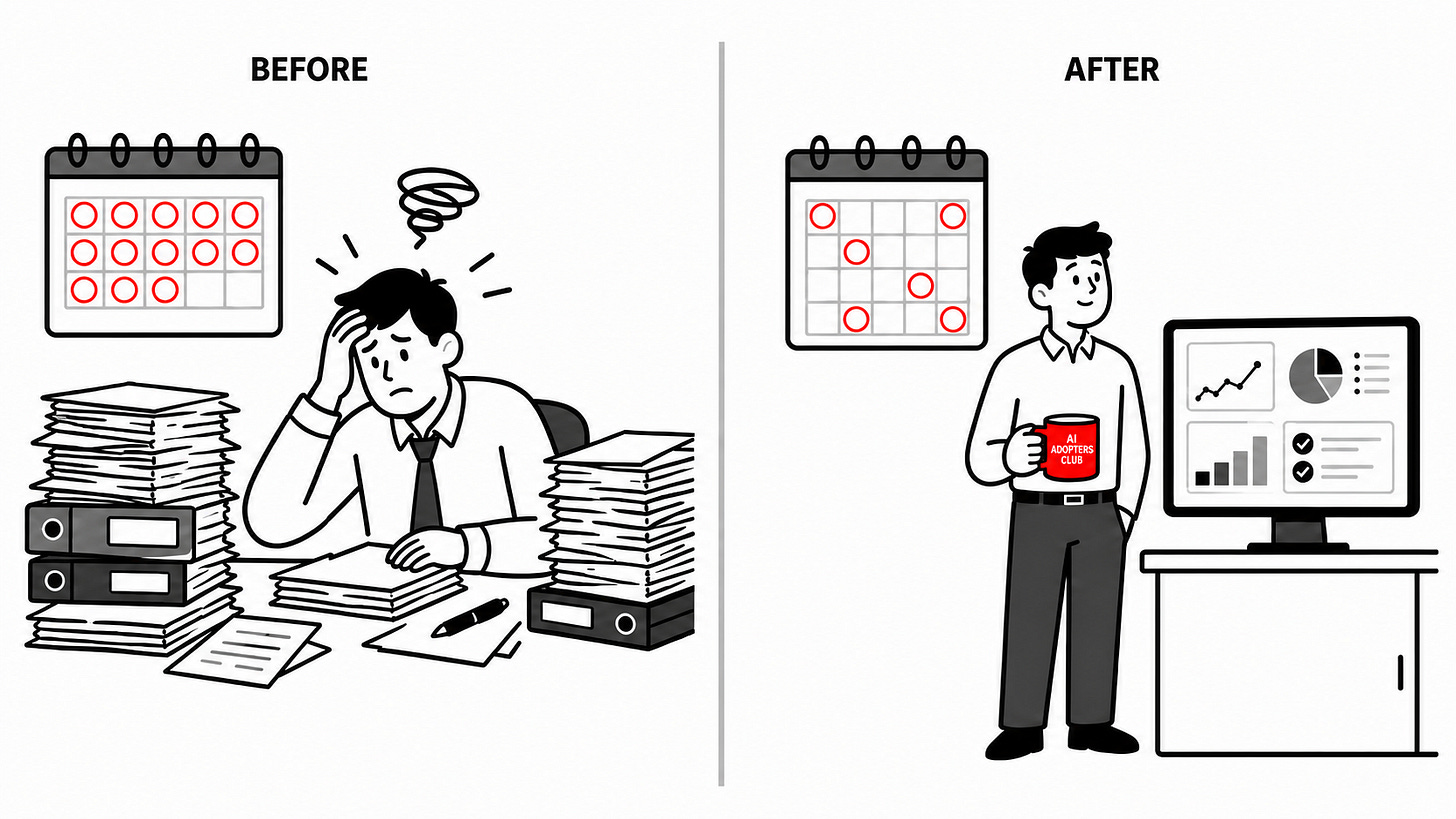

Most teams treat AI as a tool upgrade. Better chatbot. Smarter assistant. Faster summary.

That is the trap. McKinsey’s 2025 research on workplace AI shows only 21% of organisations have rebuilt workflows around AI. The other 79% bolt models onto processes designed for filing cabinets and email chains. The result is predictable. Half of all AI initiatives sit in pilot purgatory, and only about 1% of leaders describe their organisation as mature in AI.

The top performers do something different. They are nearly three times more likely to redesign workflows end to end, 55% versus 20%. They also pull at least 5% EBIT impact from AI. The rest pull pocket change.

Tools are cheap, redesign is the moat

A randomised field experiment from INSEAD and Harvard Business School tested this directly. 515 startups got equal AI access and training. One group also got training on workflow reorganisation. That group generated 90% higher revenue, found 44% more use cases, won 18% more paying customers, and needed 40% less capital.

Same tools. Same access. Redesign was the multiplier.

Layering AI onto a brittle process creates new friction. Trust drops. Value caps below 5%. Roughly 70% of failures here are organisational, not technical. Worth asking this week: which workflow are we patching that should be rebuilt?

The DGRI playbook the top 6% are using

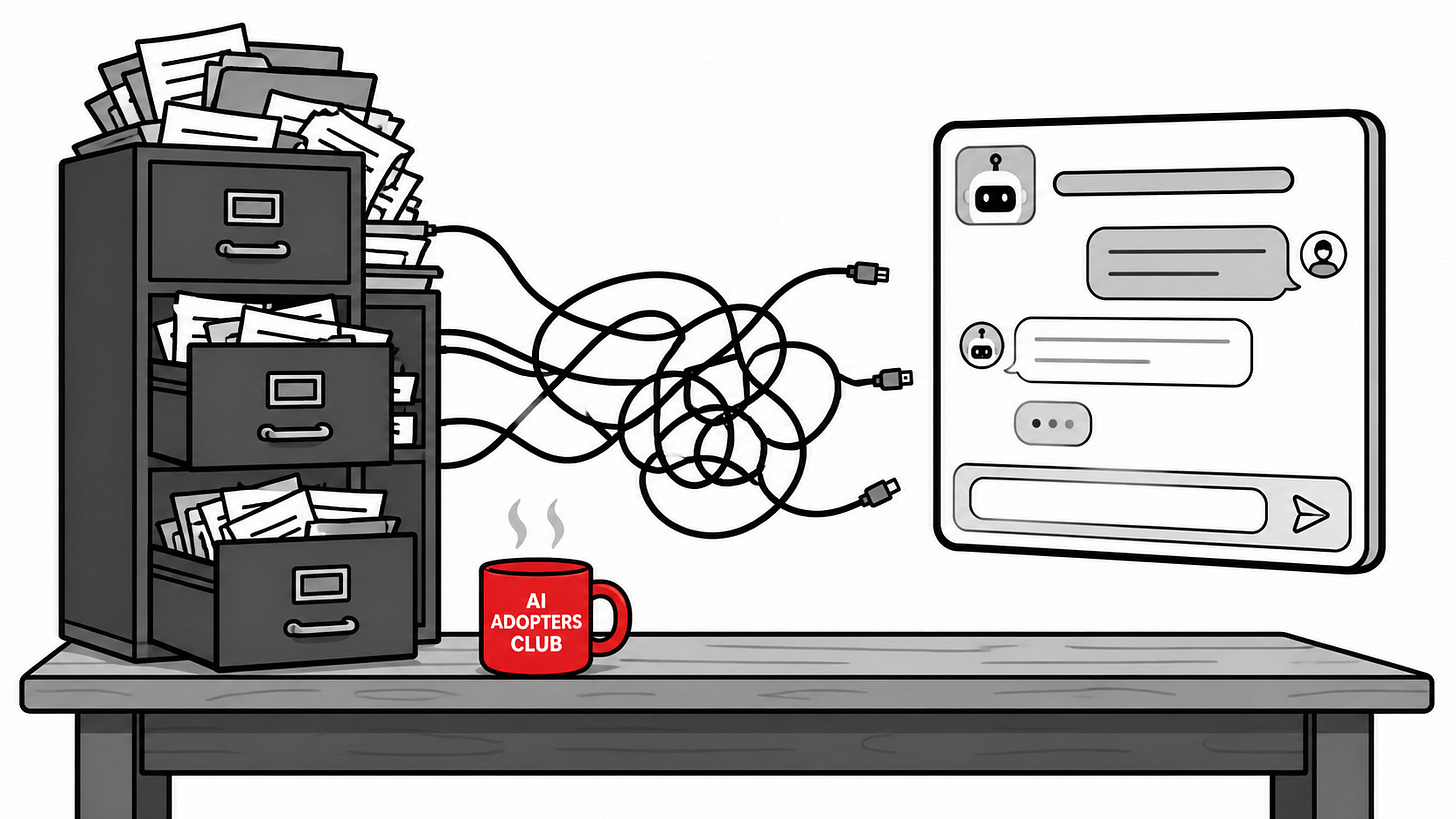

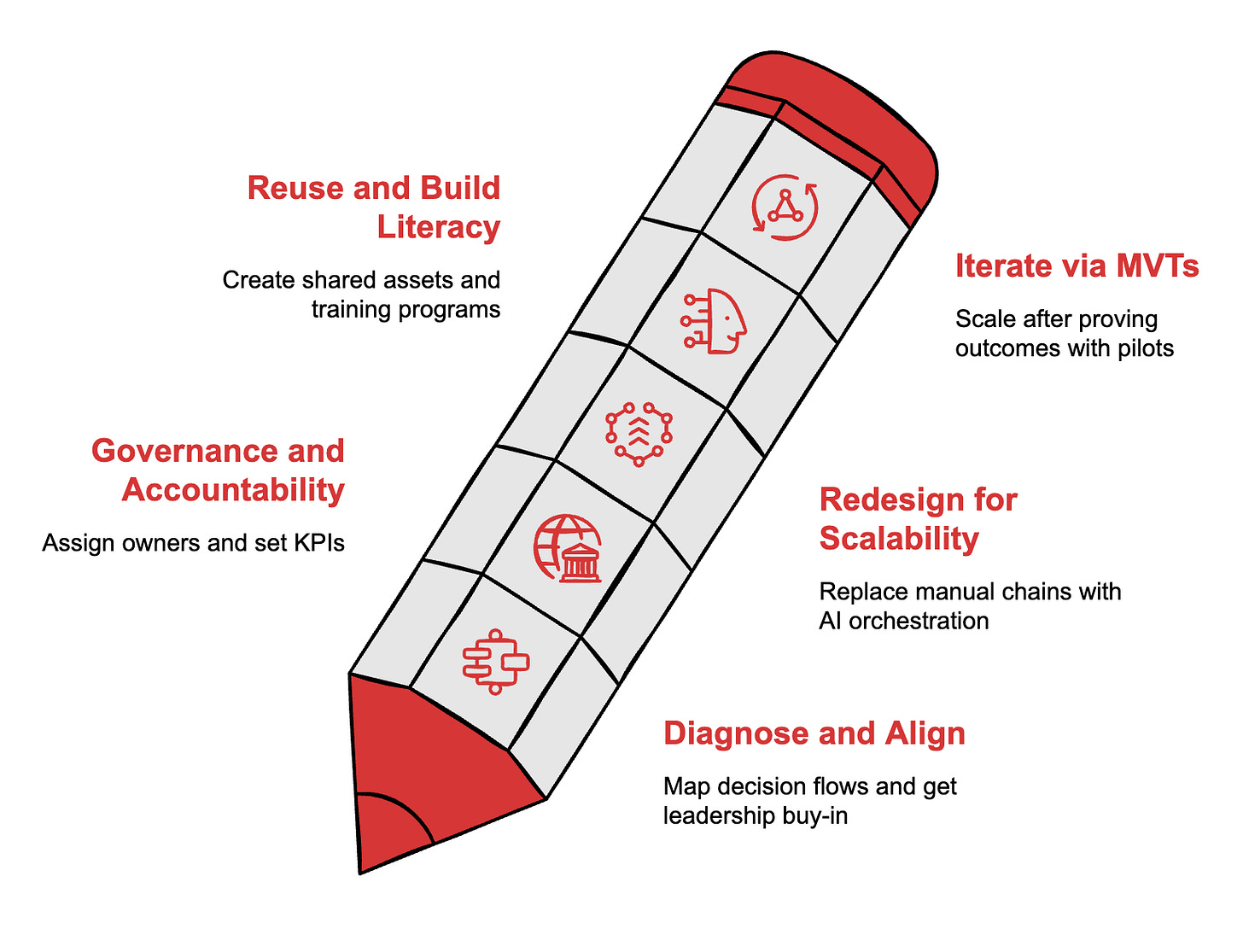

The California Management Review published a five-stage method called DGRI in late 2025, field-tested across global firms with measurable before-and-after results.

Stage 1, diagnose and align

Map decision flows in your top three workflows. Surface root inefficiencies. Get leadership buy-in on outcomes, not features. Most teams skip this and start with vendor demos. Do not.

Stage 2, governance and accountability

Assign data and model owners. Set KPIs. Build escalation paths with visible dashboards. Without owners, AI projects drift into politics and stall.

Stage 3, redesign for scalability

The multiplier stage. Replace manual chains with AI-orchestrated sequences. Route exceptions and judgment calls to humans. Build feedback loops that produce proprietary data. This is where the 90% revenue uplift comes from. Skip this stage and you have an expensive demo, not a workflow.
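For the builders on your team, here is a minimal sketch of what "route exceptions and judgment calls to humans" can look like in code. It is an illustration, not the method from the DGRI paper. The names (`StepResult`, `route`, `run_batch`), the confidence score, and the 0.8 threshold are all assumptions made up for this example.

```python
from dataclasses import dataclass


@dataclass
class StepResult:
    item_id: str
    confidence: float     # assumed: a 0.0-1.0 score your AI step reports
    needs_judgment: bool  # assumed: set by a business rule, e.g. amount over a limit


def route(result: StepResult, threshold: float = 0.8) -> str:
    """Send low-confidence or judgment-flagged items to a human, the rest onward."""
    if result.needs_judgment or result.confidence < threshold:
        return "human"
    return "auto"


def run_batch(results, threshold: float = 0.8):
    """Split a batch into queues and keep a log of every routing decision."""
    queues = {"auto": [], "human": []}
    feedback_log = []  # the raw material for a feedback loop
    for r in results:
        destination = route(r, threshold)
        queues[destination].append(r.item_id)
        feedback_log.append(
            {"item_id": r.item_id, "routed_to": destination, "confidence": r.confidence}
        )
    return queues, feedback_log


batch = [
    StepResult("inv-001", 0.95, False),
    StepResult("inv-002", 0.55, False),
    StepResult("inv-003", 0.99, True),
]
queues, feedback_log = run_batch(batch)
```

In this sketch the first invoice goes straight through and the other two land with a person. The log is the part worth keeping: every routing decision becomes data you own.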

Stage 4, reuse and build literacy

Create shared feature stores, data dictionaries, and training programmes. Your assets compound when teams stop rebuilding the same prompts and pipelines from scratch.

Stage 5, iterate via MVTs

Treat pilots as learning vehicles. Minimum viable transformations. Scale only after outcome metrics prove out.

Real numbers from operating-model surgery

A global manufacturer cut its financial-close cycle from 12 days to 6. Manual adjustments dropped 40%. Audit fees fell 15%. None of that came from buying better software. It came from redesigning reconciliations and embedding alerts where humans used to chase reports.

JPMorgan Chase saved 360,000 legal hours a year through its COiN platform, and its $2 billion in AI spend returned $2 billion in benefits in 2024. PwC reported 20% to 50% productivity gains across audit, tax, marketing, and IT after resequencing workflows around human judgment. Mayo Clinic automated lab tasks, increased test volume, and reduced staff burden.

These are operating-model wins, not prompt-engineering wins.

The superagency point most leaders miss

Satya Nadella has been clear on the design principle. AI works when human agency stays at the centre, not when it gets pushed aside. Demis Hassabis says the same. Breakthroughs come from the collaboration between people and algorithms, not the algorithms alone.

Employees are roughly three times more likely than their leaders estimate to be using AI for over 30% of their work right now. Your team is ahead of you. Redesign closes the gap by giving humans the high-judgment work and giving AI the orchestration, parallelisation, and grunt analysis.

The agentic shift makes this urgent

Agentic AI from OpenAI, Salesforce (Agentforce), and Google (Gemini) now runs full multi-step outcome loops. Legacy bolt-ons cannot support that. If your processes still depend on humans copy-pasting between tools, agents will sit idle or produce noise.

Three pitfalls to skip

Treating AI as IT. Procurement-led rollouts ignore workflow change and stay in pilot purgatory.

Bolting agents onto a process designed for humans. Agents need clean inputs and outcome ownership, not a slot in someone’s email queue.

Rewarding tool usage instead of outcome metrics. Bonuses that count “AI prompts sent” produce noise. Bonuses tied to time-to-outcome produce results.

Your move this quarter

Pick one workflow with a clear outcome metric. Proposal generation. Claims processing. Financial close. Whichever has the highest pain and the cleanest data.

Form a digital-steward team with a domain owner and a tech owner. Map the current decision flow. Mark which steps are AI-only, which need human judgment, and which need orchestration.

Set three metrics. Time-to-outcome. Exception rate. Reuse rate. Tie bonuses to workflow outcomes, not tool usage. Firms that invest over 20% of digital budgets in redesign see three to five times the returns of firms that do not.
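If you want a starting point for tracking those three metrics, a rough sketch follows. The `Run` record and its field names are hypothetical, and the definitions used here (median hours per run, share of runs escalated, share of runs that reused a shared asset) are one reasonable reading, not a standard.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Run:
    hours_to_outcome: float  # assumed: elapsed hours from request to finished outcome
    was_exception: bool      # assumed: True if the run was escalated to a human
    reused_asset: bool       # assumed: True if it used a shared prompt or pipeline


def workflow_metrics(runs):
    """Summarise time-to-outcome, exception rate, and reuse rate for one workflow."""
    if not runs:
        raise ValueError("need at least one run to compute metrics")
    total = len(runs)
    return {
        "median_hours_to_outcome": median([r.hours_to_outcome for r in runs]),
        "exception_rate": sum(r.was_exception for r in runs) / total,
        "reuse_rate": sum(r.reused_asset for r in runs) / total,
    }


runs = [
    Run(10.0, False, True),
    Run(20.0, True, True),
    Run(30.0, False, False),
    Run(40.0, False, True),
]
metrics = workflow_metrics(runs)
```

Median is used instead of the average so that one stuck case does not distort the picture. Swap in whatever definition your domain owner and tech owner agree on, then keep it fixed so the before-and-after comparison is fair.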

Pilots fail because the workflow underneath them was never rebuilt. Fix that, and the rest follows.

Adapt & Create,

Kamil