A Kindergarten Teacher Just Taught the Best AI Prompting Lesson on the Internet, and It’s Not What You Think

Watch a peanut butter experiment expose why your most senior people struggle with AI

Hey Adopter,

A kindergarten teacher in a small classroom just delivered the sharpest AI prompting lesson I’ve seen this year. She doesn’t know it. She wasn’t trying to. She was teaching descriptive writing.

Watch the video. It runs about three minutes. Bread, peanut butter, jelly, a knife, and a teacher who promised her five-year-olds she would follow their written instructions exactly. No assumptions. No common sense. Nothing added.

The setup

Each kid wrote out how to make a peanut butter and jelly sandwich. The teacher performed the instructions to the letter.

“You get bread. You get peanut butter. You get jelly.” She picks up the bread. Picks up the jars. Holds them. Asks the class, “Did I make it?” They laugh.

“Spread jelly on the bread.” She spreads jelly with her fingers, because nobody mentioned a knife. “Oo, it’s crunchy,” she mutters to a room full of giggling kindergarteners.

A few more attempts pile jelly and peanut butter straight onto bare bread. No plate. No knife. No flipping. Just a mess on the table.

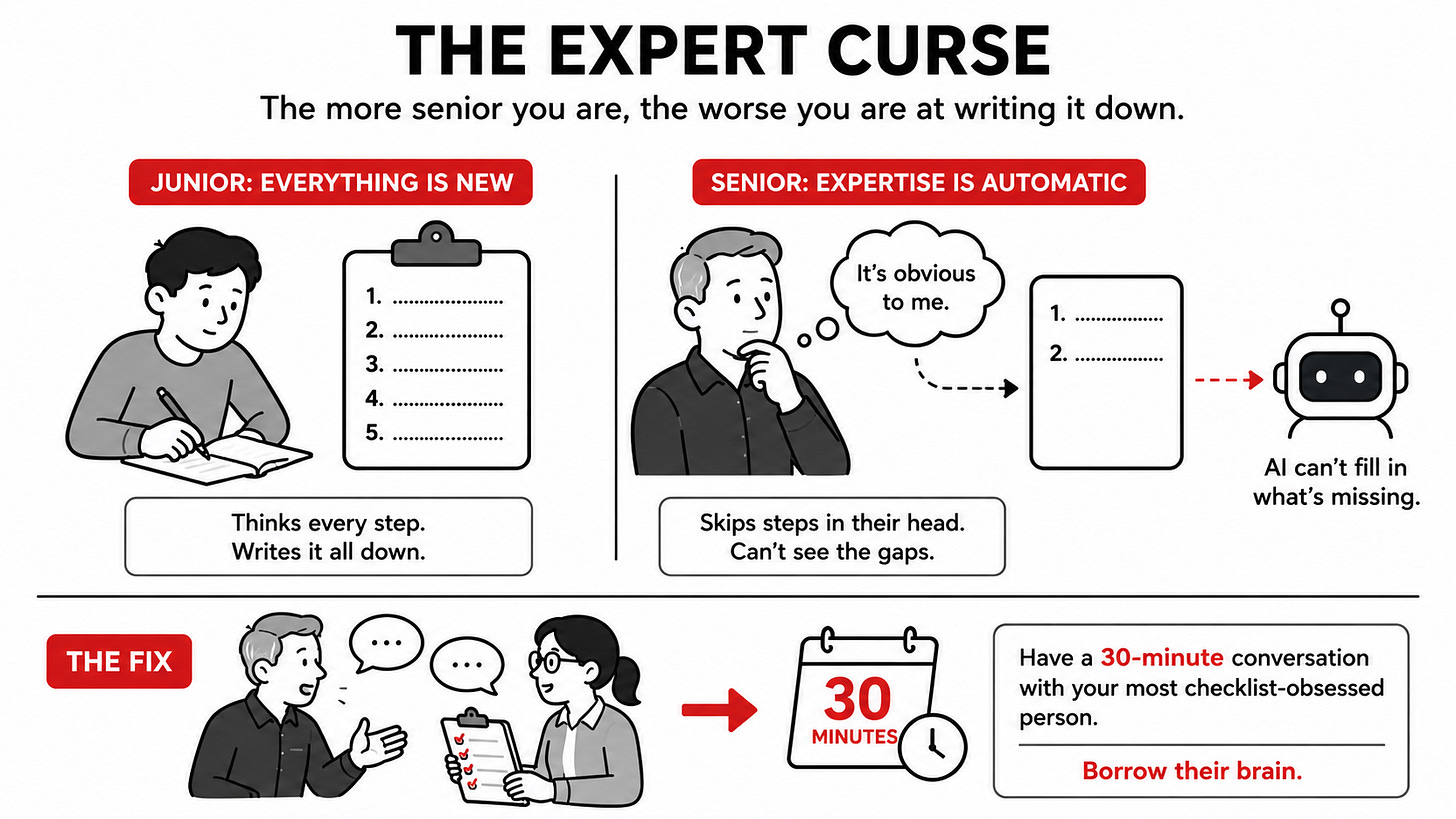

Then the class revises together. “Put bread on the plate. Grab the knife. Scoop peanut butter. Spread it. Get another piece. Put jelly on it. Flip it onto the first one.”

Sandwich. Music swells. Kids cheer.

The reversal

Here is what nobody in that room said out loud. The kids weren’t the ones being taught.

Every adult watching that video has the exact same problem with AI. You ask for “a polished email.” You ask for “better copy.” You ask it to “summarise this properly.” The model nods and hands you mush, because you forgot the plate. You forgot the knife. You forgot to flip the bread.

You assumed common sense. AI doesn’t have any.

The expert curse

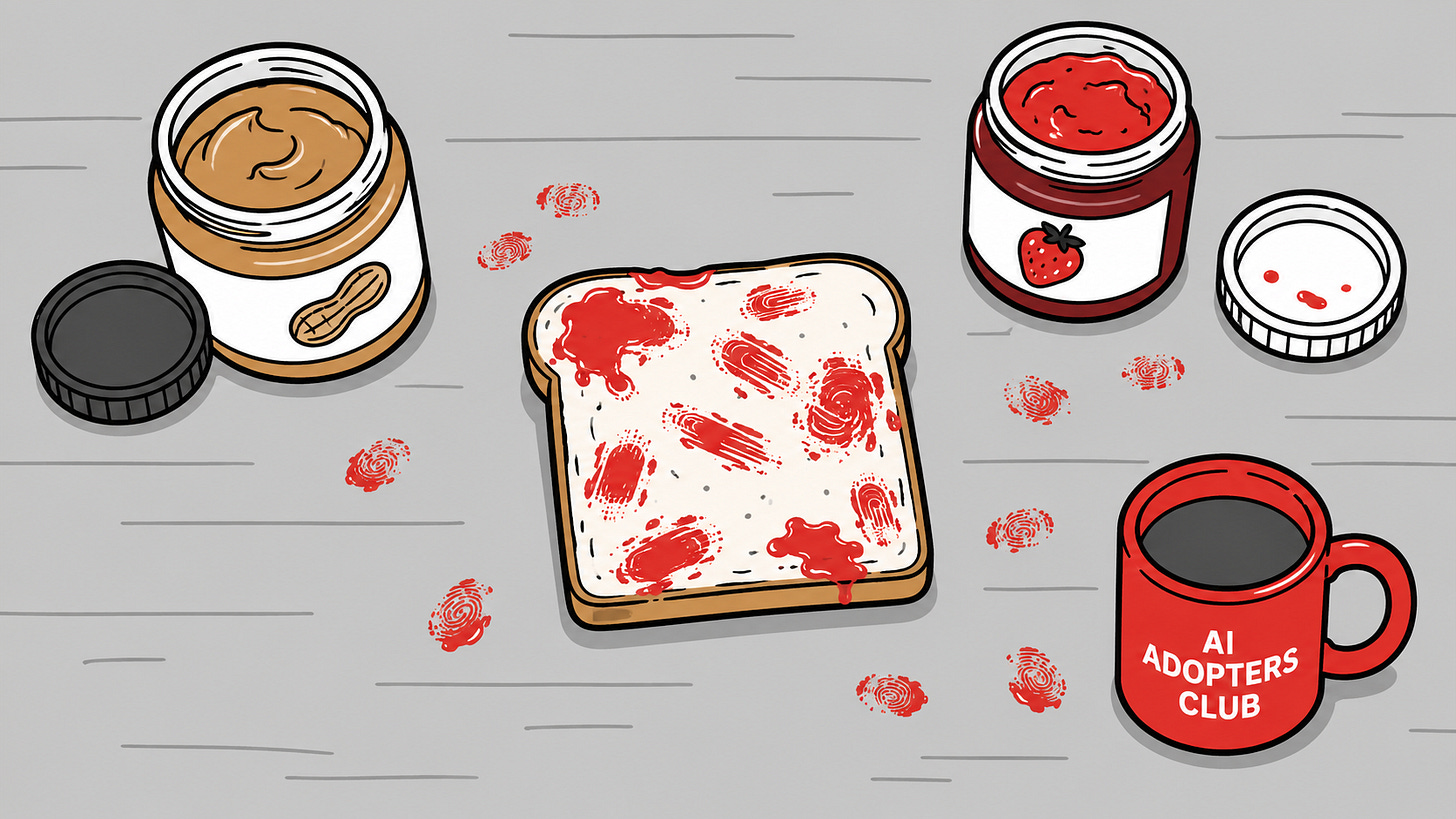

Here’s the uncomfortable part. The more senior you are, the worse you tend to be at this.

A junior analyst writing a procedure has to think through every step, because nothing is automatic to them yet. A 20-year veteran skips half the steps in their head, because their expertise has gone underground. They genuinely cannot articulate what they do anymore. Ask a senior salesperson how they close a deal and you’ll get vibes, not steps. Ask a marketing director what makes a campaign land and you’ll get taste, not a recipe.

That tacit knowledge is invisible to them. It’s invisible to your AI. It’s the gap where most “we tried AI and it didn’t work” stories actually live.

“The better you are at your job, the worse you are at telling AI how to do it.”

Who’s already good at this, who needs to catch up

Some roles have lived in checklists their whole careers. Software engineers. Operations and process people. Pilots and surgeons. Lawyers drafting contracts. QA, audit, compliance. Anyone who has ever written a runbook or a standard operating procedure. These people moved their tacit knowledge onto paper years ago. AI feels native to them, because they were already translating expertise into instructions every day.

Other roles built their value on ambiguity. Executives selling vision. Marketers chasing taste. Salespeople reading rooms. Strategists pointing at the horizon. Most middle managers. Their work has rarely required a written procedure, and now they’re asked to brief a machine that needs one. The hierarchy quietly inverts.

If that’s you, the fix isn’t a prompting course. It’s a 30-minute conversation with the most checklist-obsessed person on your team. They’ve been dissecting workflows for years. Borrow their brain.

The kindergarten test

Here’s an easy test you can run today.

Pick one task you want AI to handle this week. Just one. Now write the instructions like you’re handing them to a five-year-old who has never made a sandwich. Plate. Knife. Order. Flip.

Then read your prompt out loud. Every time you catch yourself thinking “obviously” or “you know what I mean,” that’s a missing step. Add it.

Run that test once and you’ll see what your AI has been seeing for months. A pile of jelly on the table. A jar held in the air. A confused machine doing exactly what you told it to do.
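If you want a crude mechanical version of the read-aloud check, here is a minimal Python sketch. It is only an illustration, not a real prompt linter: the function name `find_missing_steps`, the marker list, and both sample prompts are hypothetical. The markers are simply the phrases from this post ("obviously", "you know what I mean") plus the vague words from the earlier examples ("polished", "better", "properly"). A keyword scan like this will miss most hidden assumptions, so treat a hit as a nudge to spell something out, not as proof the prompt is complete when nothing is flagged.

```python
import re

# Hypothetical marker list: the first two phrases come from the read-aloud
# test, the rest from the vague requests quoted earlier in the post.
ASSUMPTION_MARKERS = [
    "obviously",
    "you know what i mean",
    "polished",
    "better",
    "properly",
]


def find_missing_steps(prompt: str) -> list[tuple[int, str]]:
    """Return (line_number, marker) pairs for every marker found in the prompt."""
    hits = []
    for line_number, line in enumerate(prompt.splitlines(), start=1):
        lowered = line.lower()
        for marker in ASSUMPTION_MARKERS:
            # Whole-word match, so "better" does not fire inside another word.
            if re.search(rf"\b{re.escape(marker)}\b", lowered):
                hits.append((line_number, marker))
    return hits


# Two made-up prompts: one that forgets the plate and the knife, one that doesn't.
vague = "Write a polished email.\nObviously keep it short."
explicit = (
    "Write a 120-word email to a customer whose order shipped late.\n"
    "Open with an apology, give the new delivery date, offer a 10% refund."
)

for line_number, marker in find_missing_steps(vague):
    print(f"line {line_number}: '{marker}' hides a step; spell it out")
```

The vague prompt gets flagged on both lines, while the explicit one passes, because it names the length, the audience, and the order of the content instead of leaning on words like "polished".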

A kindergarten teacher in a small classroom with a jar of jelly is doing better AI training than most consultancies on LinkedIn. Steal her method.

Adapt & Create,

Kamil

At least in the case of marketers, you’re doing them a disservice. Any good marketer has to learn how to give a brief to either external agencies or internal creatives. Any good marketer isn’t operating on vibes but on a whole bunch of metrics, carefully plotted plans and campaigns, and frequently orchestrating multiple layers of complexity at once.

Do all marketers understand the tech and how to get the best out of it? No. But other things get in the way of their wanting to adopt it, like bosses telling them they can replace junior staff with AI. That ignores the fact that the echelons above will have no pool of junior talent to promote into their ranks if they do so.

My first career was in marketing. I’m also damn good at prompting for the kind of results that can actually be used, and I am convinced my marketing experience is a huge help in that, because I never assume an agency has knowledge I haven’t given them.