Your company needs an AI policy and these 3 prompts will build one today

Most businesses are flying blind on AI. Here is your 30-minute fix.

Hey Adopter,

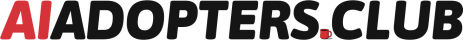

78% of employees use AI tools their employer never approved. 93% paste company data into those tools. 91% believe doing so is perfectly safe. (2025 WalkMe/SAP survey)

The cost of that confidence gap? Breaches involving shadow AI cost $670,000 more on average than standard incidents, per IBM’s 2025 Cost of a Data Breach Report. Samsung found out the hard way in 2023 when engineers pasted proprietary source code into ChatGPT: once submitted, the code sat on external servers, could be used for model training, and could not be pulled back.

Meanwhile, 145 AI-related laws passed across US states in 2025. The Colorado AI Act takes effect June 30, 2026, with penalties of up to $20,000 per violation. Texas TRAIGA is already live.

Only 11% of organisations have any responsible AI capabilities in place.

Good news: you can fix that in 30 minutes, for free, using the tools your team already has.

AI can write its own rulebook

The NIST AI Risk Management Framework is free, voluntary, and backed by the US government. Both Colorado and Texas explicitly give legal safe harbour to companies that follow it.

You do not need to read all 42 pages. You need the right prompts to turn it into a working policy.

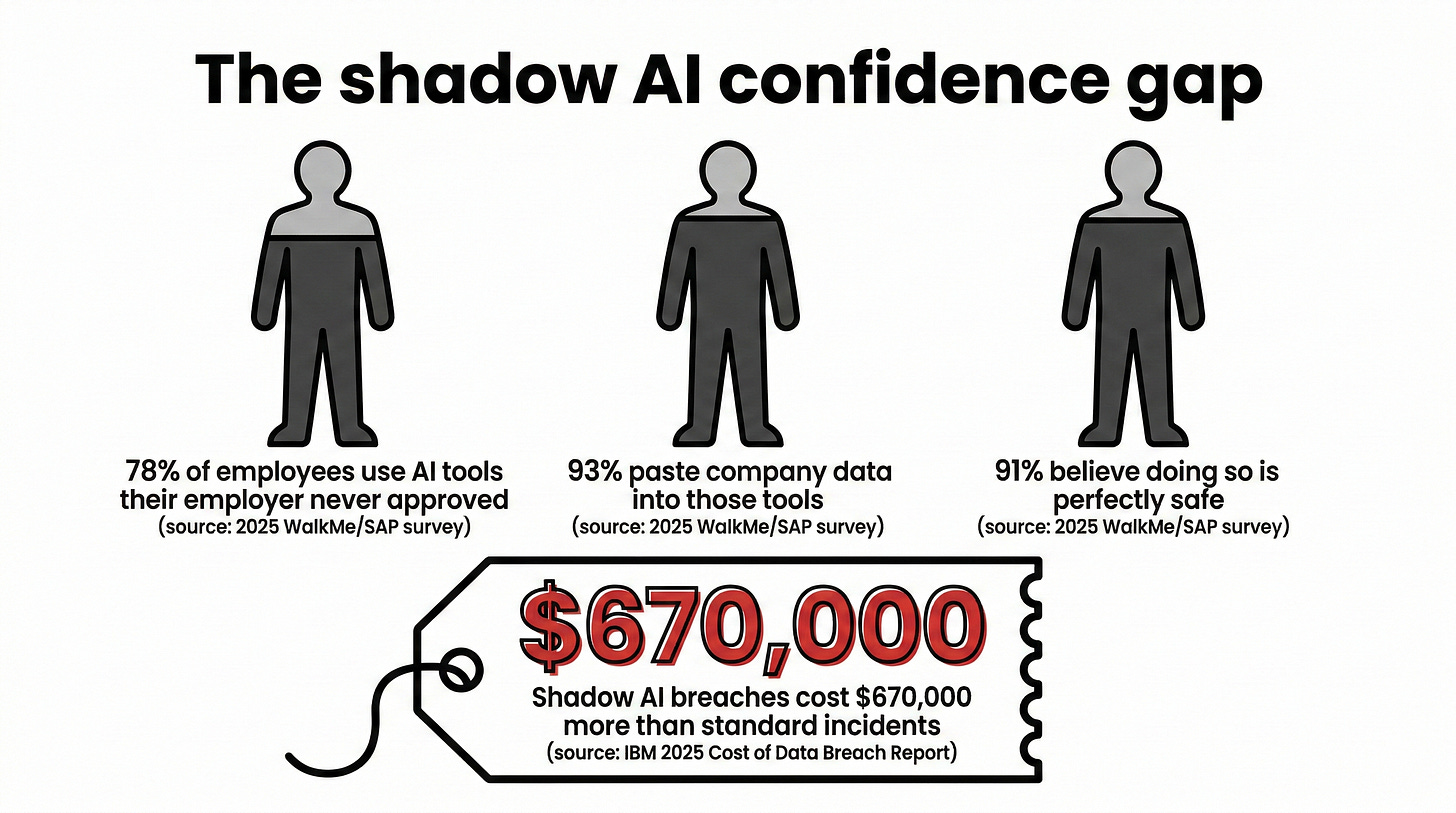

I have built a 3-prompt sequence you can run in Claude, ChatGPT, or Gemini. Each prompt feeds the next. Run them in order, customise the bracketed sections, and you will walk away with five usable governance documents.
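If you would rather script the sequence than paste it into a chat window, the "each prompt feeds the next" idea can be sketched in a few lines of Python. This is a minimal illustration, not the prompts themselves: `run_prompt_chain`, `ask_model`, and the `[COMPANY]`-style placeholders are hypothetical names chosen for this example, and `ask_model` stands in for whatever function you use to send text to Claude, ChatGPT, or Gemini.

```python
from typing import Callable, Dict, List


def run_prompt_chain(
    prompts: List[str],
    company_details: Dict[str, str],
    ask_model: Callable[[str], str],
) -> List[str]:
    """Run prompts in order, passing each output into the next prompt.

    `ask_model` is an assumption: any function that takes a prompt string
    and returns the model's reply as a string.
    """
    outputs: List[str] = []
    previous = ""
    for template in prompts:
        prompt = template
        # Fill in the bracketed sections, e.g. "[COMPANY]" -> "Acme Ltd".
        for key, value in company_details.items():
            prompt = prompt.replace(f"[{key}]", value)
        # From the second prompt onward, attach the previous step's output.
        if previous:
            prompt = f"{prompt}\n\nOutput of the previous step:\n{previous}"
        previous = ask_model(prompt)
        outputs.append(previous)
    return outputs


def echo_model(prompt: str) -> str:
    """Offline stand-in for a real model call, used only to show the flow."""
    return f"[draft based on {len(prompt)} characters of prompt]"
```

Calling `run_prompt_chain(["Draft an AI policy for [COMPANY].", "Tighten the draft."], {"COMPANY": "Acme Ltd"}, echo_model)` returns one output per prompt. Swap `echo_model` for a real client call once you are happy with the flow.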

Without these prompts, governance setup takes 6-12 weeks and $10K-$50K in consulting fees. With them, you get a structured first draft in a single sitting.

If you advise clients on AI, run these prompts with their company details and you have a deliverable most firms charge $5K-$15K to produce from scratch.