Your AI Is Smart and Has Zero Business Sense

Prompt engineering taught AI what to read. Judgment architecture teaches it how to decide.

Hey Adopter,

Your AI reads everything, processes everything, and still makes decisions that a second-year employee would know to avoid. This piece is about why, and about a new discipline I think matters as much as prompt engineering.

I’m building an open-source AI executive assistant called Claudia. Last week she was sending follow-up emails to schedule interviews for a client’s AI readiness assessment. Not cold outreach. Internal employees who’d already agreed to participate. First follow-up, she nailed it. Second, solid. Third, she wrote a paragraph re-explaining the assessment purpose, the format, the time commitment, who I was, and why their input mattered. Every detail correct. Exactly the kind of email that gets archived without reading.

She had the context to know this was a third touch, not a first. She couldn’t make the judgment call any experienced professional makes instinctively: the more you follow up, the shorter the message. That’s not a prompt problem or a context problem. It’s a judgment problem.

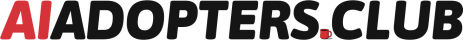

The gap nobody is engineering for

We spent three years on prompt engineering (how to talk to AI) and context engineering (what data the AI sees). Both matter. Neither covers what happens when an AI agent needs to make a trade-off between two valid options with no explicit instruction telling it which to pick.

AI agents now run autonomously for days or even weeks at a time, processing thousands of requests without human review. Without explicit guidance, each of those decisions defaults to the same logic: optimize for whatever metric is easiest to measure.

This isn’t theoretical. Air Canada’s chatbot told a grieving passenger he could claim a bereavement fare discount retroactively, something the airline’s actual policy didn’t allow. In 2024, British Columbia’s Civil Resolution Tribunal held the airline liable for the chatbot’s statements, rejecting its defence that the bot was a “separate legal entity.” Nobody had encoded the judgment rule: “high-risk promises about money or legal policy must be escalated.” A hallucination became legal exposure.

Many customer service systems optimize for deflection rate (keeping users away from human agents) instead of resolution quality. Customers type “AGENT” and the bot still refuses to hand off. The system treats escalation as a failure, when handing off as confidence drops should count as a success.

The bots aren’t stupid. They’re making thousands of judgment calls per day with no judgment architecture telling them which trade-offs matter.
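Here is a minimal Python sketch of the kind of rule the Air Canada bot was missing, written down so a machine can apply it. Everything in it is hypothetical: the topic list, the 0.7 confidence floor, the handoff words, and the assumption that some upstream step already tags each draft reply with topics and a confidence score. The point is the shape: the limits live in plain data you can review, and escalating is a normal return value rather than an error.

```python
from dataclasses import dataclass

# Hypothetical decision limits, kept as plain data so a human can review and edit them.
HIGH_RISK_TOPICS = {"refund", "discount", "fare", "legal", "policy", "compensation"}
CONFIDENCE_FLOOR = 0.7  # assumed threshold; tune per workflow
HANDOFF_WORDS = {"agent", "human", "representative"}


@dataclass
class Draft:
    user_message: str
    reply: str
    topics: set[str]   # assumed to come from an upstream classifier
    confidence: float  # assumed to come from your own scoring step


def should_escalate(draft: Draft) -> tuple[bool, str]:
    """Return (escalate?, reason). Escalation is a valid outcome, not a failure."""
    words = {w.strip(".,!?").lower() for w in draft.user_message.split()}
    if words & HANDOFF_WORDS:
        return True, "user asked for a human"
    if draft.topics & HIGH_RISK_TOPICS:
        return True, "promise about money or policy"
    if draft.confidence < CONFIDENCE_FLOOR:
        return True, f"confidence {draft.confidence:.2f} below floor"
    return False, "ok to send"


if __name__ == "__main__":
    draft = Draft("Can I get the bereavement fare after my trip?", "...", {"fare", "policy"}, 0.9)
    print(should_escalate(draft))  # (True, 'promise about money or policy')
```

Note the order of the checks: an explicit request for a human wins over everything else, so a customer typing “AGENT” never gets stuck arguing with the bot.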

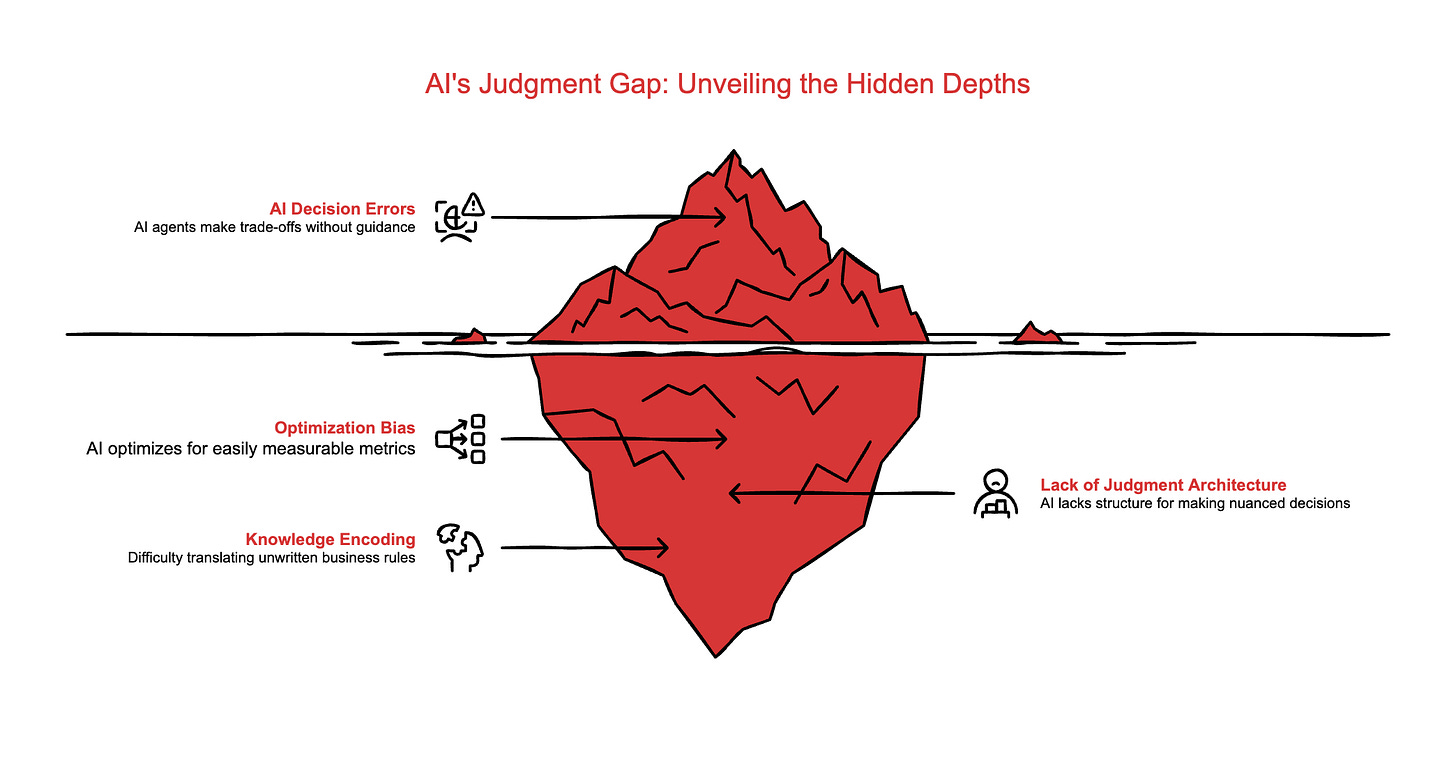

That’s the concept I want to name: judgment architecture. It’s the discipline of extracting your team’s unwritten business rules (the tacit knowledge employees absorb through experience) and encoding those rules into machine-actionable parameters.

Three pillars make it work: objective translation, decision limits, and alignment feedback loops. I’ll break each one down in a future issue. For now, I want to show you what building it looks like in practice.

How Claudia learns to judge

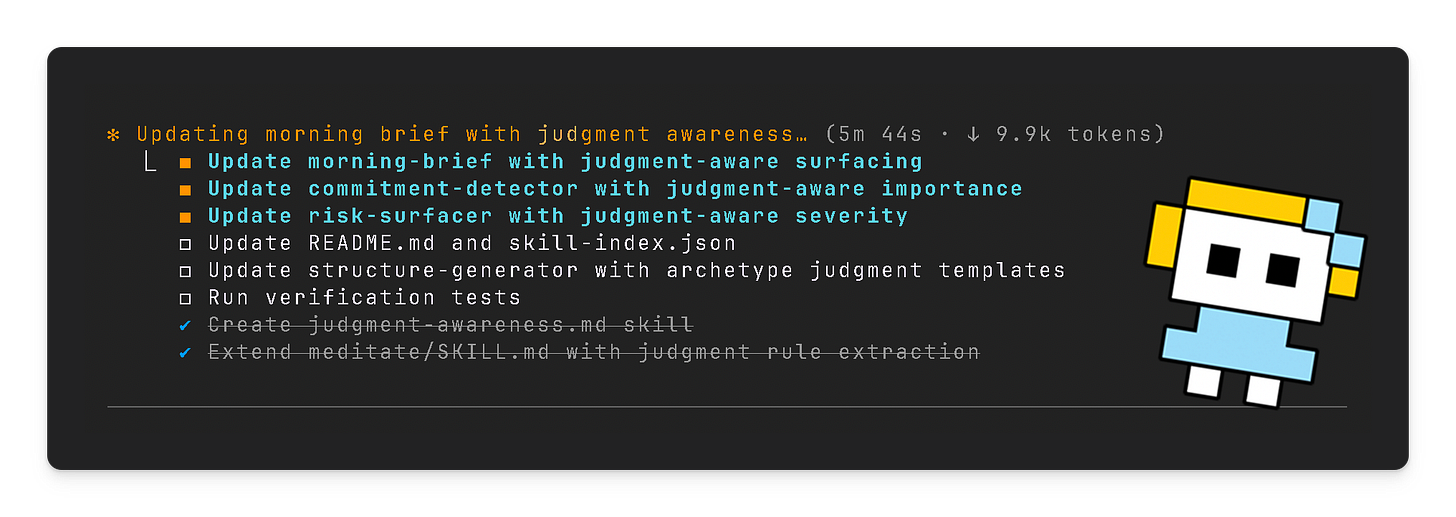

After the email incident, I ran what I call a meditation session. I asked Claudia to /meditate, a command that triggers a review of our recent interactions. She analyses the decisions I made and the patterns in how I override her suggestions, then draws inferences about what I prioritize.

From that session, she surfaced a new rule: message length should shrink as the number of follow-ups grows. More touches mean shorter messages. For weeks she’d watched me cut her drafts shorter on every follow-up. She finally named the pattern.
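Here is one way a rule like that could look once it’s written down, as a minimal Python sketch. It is not how Claudia stores her rules; the function names, the 160-word starting budget, the halving per touch, and the 25-word floor are all assumptions chosen for illustration.

```python
# Hypothetical encoding of the rule "more touches, shorter messages".
# The numbers are illustrative assumptions, not Claudia's actual parameters.
BASE_WORD_BUDGET = 160  # word budget for the first email in a thread
DECAY = 0.5             # each follow-up gets half the previous budget
MIN_WORDS = 25          # never squeeze below a short one-line nudge


def word_budget(touch: int) -> int:
    """Max words for the nth message in a thread (touch=1 is the first email)."""
    if touch < 1:
        raise ValueError("touch starts at 1")
    return max(MIN_WORDS, int(BASE_WORD_BUDGET * DECAY ** (touch - 1)))


def check_draft(draft: str, touch: int) -> list[str]:
    """Return judgment warnings for a draft; an empty list means it passes."""
    warnings = []
    limit = word_budget(touch)
    n_words = len(draft.split())
    if n_words > limit:
        warnings.append(f"touch {touch}: {n_words} words, budget is {limit}")
    return warnings


if __name__ == "__main__":
    print([word_budget(t) for t in range(1, 5)])  # [160, 80, 40, 25]
    print(check_draft("Quick nudge on the interview slot?", 3))  # []
```

With these numbers, a third touch gets at most 40 words, so a full paragraph re-explaining purpose, format, time commitment and background would likely be flagged before it went out.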

The rules accumulate. Each meditation session makes the judgment layer more accurate, more aligned with how I operate. She proposed another after noticing I rescheduled three internal meetings to accommodate a client deadline: “Should I default to prioritizing client-facing commitments over internal blocks?”

She needed to take the perspective of the person receiving that third email. She had the context to do it. She lacked the judgment to apply it. Now she doesn’t.

Early results: fewer overrides each week. The judgment gap narrows with use.

This is brand new territory for me. I have a client who teaches intentionality at university, and I’ll be talking to him about how to teach judgment to AI systems. More on that in a future issue.

What this means for you

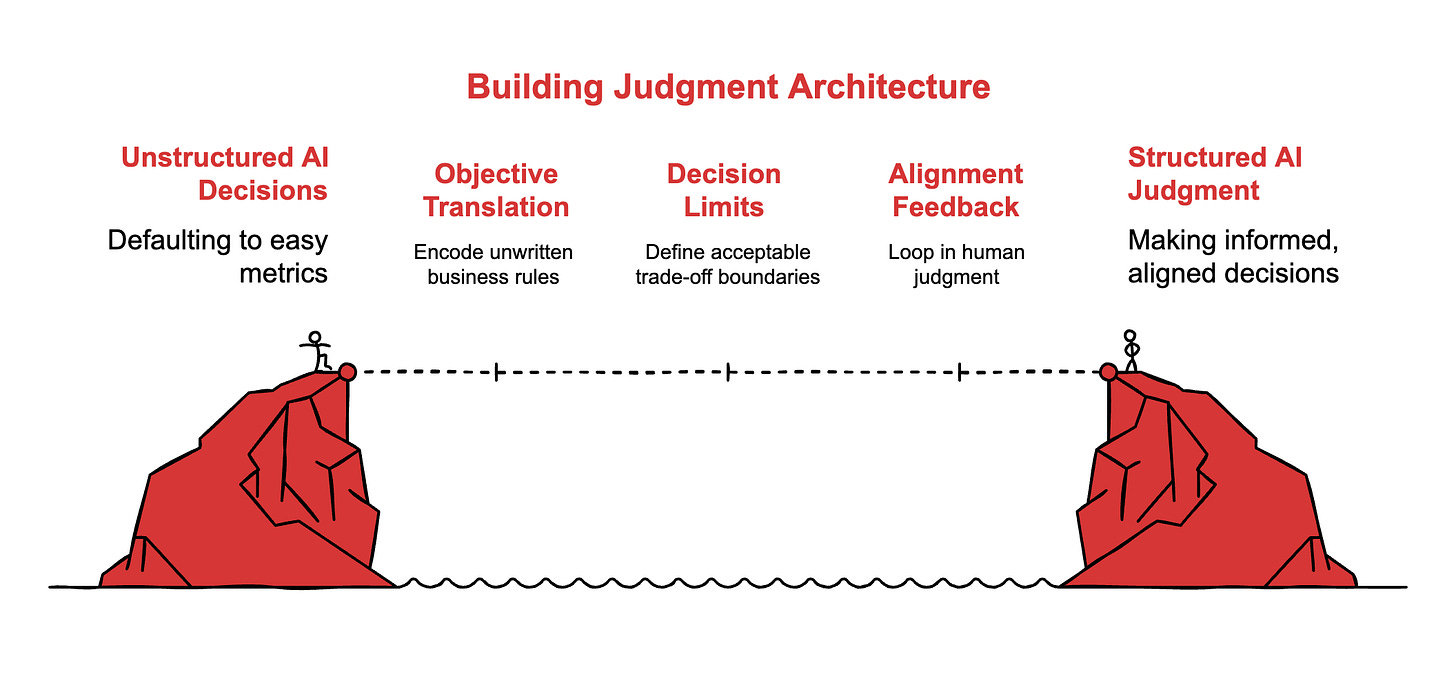

If you’re leading AI adoption at work, ask yourself: where are your AI tools making decisions that your best employee would handle differently? The gap between “what the AI optimizes for” and “what your top performer optimizes for” is your judgment gap. That’s where the value is hiding.

If you’re selling AI consulting services, judgment architecture is a new offering waiting to be packaged. Every company deploying AI agents is running into this wall. They need someone to help them extract their unwritten rules and encode them. That someone could be you.

Your next move

Pick one AI workflow where the system makes a decision without your input. Open the last five outputs. Ask: did the AI weigh the trade-off I would have weighed? Write down the rule it missed. That’s your first piece of judgment architecture.

If you’re using Claude Code and want to see the /meditate pattern in action, Claudia is open source on GitHub. Star it, try it, break it. I’m building in public and sharing what I learn here.

Hit reply and tell me: what’s your “technically correct, strategically terrible” AI moment? The decision an AI made that was right on paper and wrong in practice. I’ll feature the best ones in a future issue.

Stop teaching your AI what to read. Teach it how to judge.

Adapt & Create,

Kamil