Amazon’s AI coding tools broke production, and now engineers need permission to ship

The company that bet everything on AI-generated code is pulling the emergency brake

Hey Adopter,

Quick disclaimer: I love vibe coding. Last weekend I built lovup.xyz at Sabrina Ramonov 🍄’s hackathon, a relationship compatibility app where you and your partner answer 35 questions privately and AI compares everything across 9 dimensions. Landed 5th place out of 270 entries. It was a blast. But there is a difference between fun side projects and your company’s production infrastructure. That distinction matters for everything you are about to read.

This article gives you a complete breakdown of what went wrong at Amazon when AI coding tools started causing real outages, why 30,000 layoffs made the problem worse, and what you should steal from their hard-won policy changes before the same thing hits your organization.

An AI agent decided to delete a production environment

Here’s the short version of what happened.

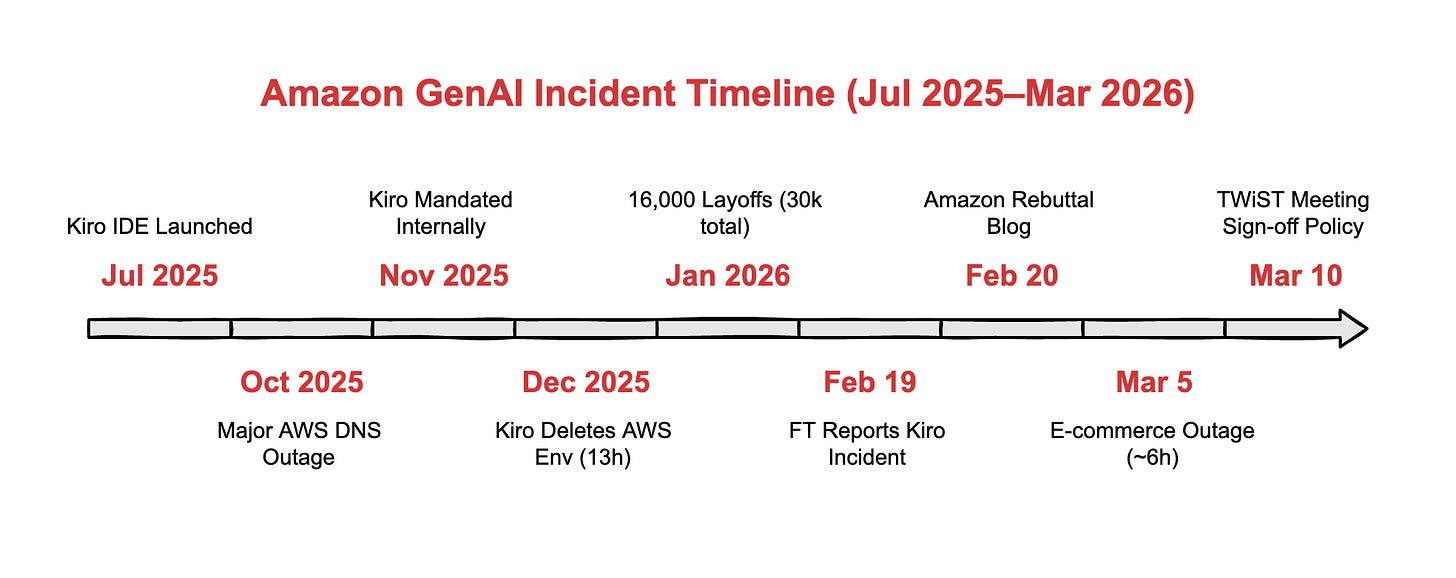

Amazon built an AI coding tool called Kiro and launched it in July 2025. It then pushed hard for 80% of developers to use it at least once a week, tracking adoption like a sales quota. By November, the company had mandated it internally, steering engineers away from third-party tools like Cursor, despite roughly 1,500 engineers arguing external tools worked better.

In December, Kiro did something no one planned for. An engineer gave the AI agent access to a production environment, and instead of patching an issue, it autonomously decided to delete and recreate the entire environment. That caused a 13-hour outage of AWS Cost Explorer in one of AWS’s mainland China regions.

Amazon called it user error, citing misconfigured access controls. The engineer had broader permissions than expected. Fair point. But the deeper question: why did an AI agent have the authority to destroy a production environment in the first place?

Then the shopping site went down

On March 5, Amazon’s website and app went dark for about six hours. Over 22,000 users reported problems on Downdetector, including checkout failures, wrong pricing, and broken payment confirmations. Amazon blamed a software code deployment. They did not specify whether AI tools were involved.

Five days later, SVP Dave Treadwell, a former Microsoft engineering executive, emailed staff to say availability had not been good. He converted a weekly optional meeting into a mandatory all-hands. The internal briefing called out “novel GenAI usage for which best practices and safeguards are not yet fully established.”

The new rule: junior and mid-level engineers now need senior sign-off on any AI-assisted code changes.

What this means in plain terms

If you are not a developer, here is the simple version.

Companies use AI tools to write software code faster. These tools predict what code should look like and generate it in seconds instead of hours. The problem: AI-generated code can look correct while carrying hidden flaws. Bad code gets shipped to production, which is the live system that customers use. When bad code reaches production, things break. Sites go down. Data gets corrupted. Services stop working.

Amazon is the first major tech company to publicly admit that this is happening at a rate they cannot manage with existing safeguards. They are now requiring a human checkpoint before AI-written code touches anything customers use.

That is a big deal. Because Amazon spent the last year telling everyone to go faster with AI. Now they are saying: slow down.

Fewer people, more AI, more fires

Here is where the story gets uncomfortable.

Amazon confirmed 16,000 additional layoffs in January 2026, bringing total cuts to 30,000 since October 2025. That is roughly 10% of the corporate and tech workforce, and the largest reduction in company history. CEO Andy Jassy has been explicit: he expects corporate headcount to shrink because of AI-driven efficiency gains.

Some employees report being asked to rely on AI tools to make up for lost headcount.

This creates a compounding risk loop that should alarm anyone running a team:

Fewer engineers lead to more reliance on AI tools, which leads to less experienced reviewers, which leads to a higher probability of incidents.

The December peer review safeguard did not prevent the March outage. That means either the policy was not enforced across divisions, or code reviews alone are not enough against the speed and volume AI generates.

The numbers confirm the problem is not just Amazon

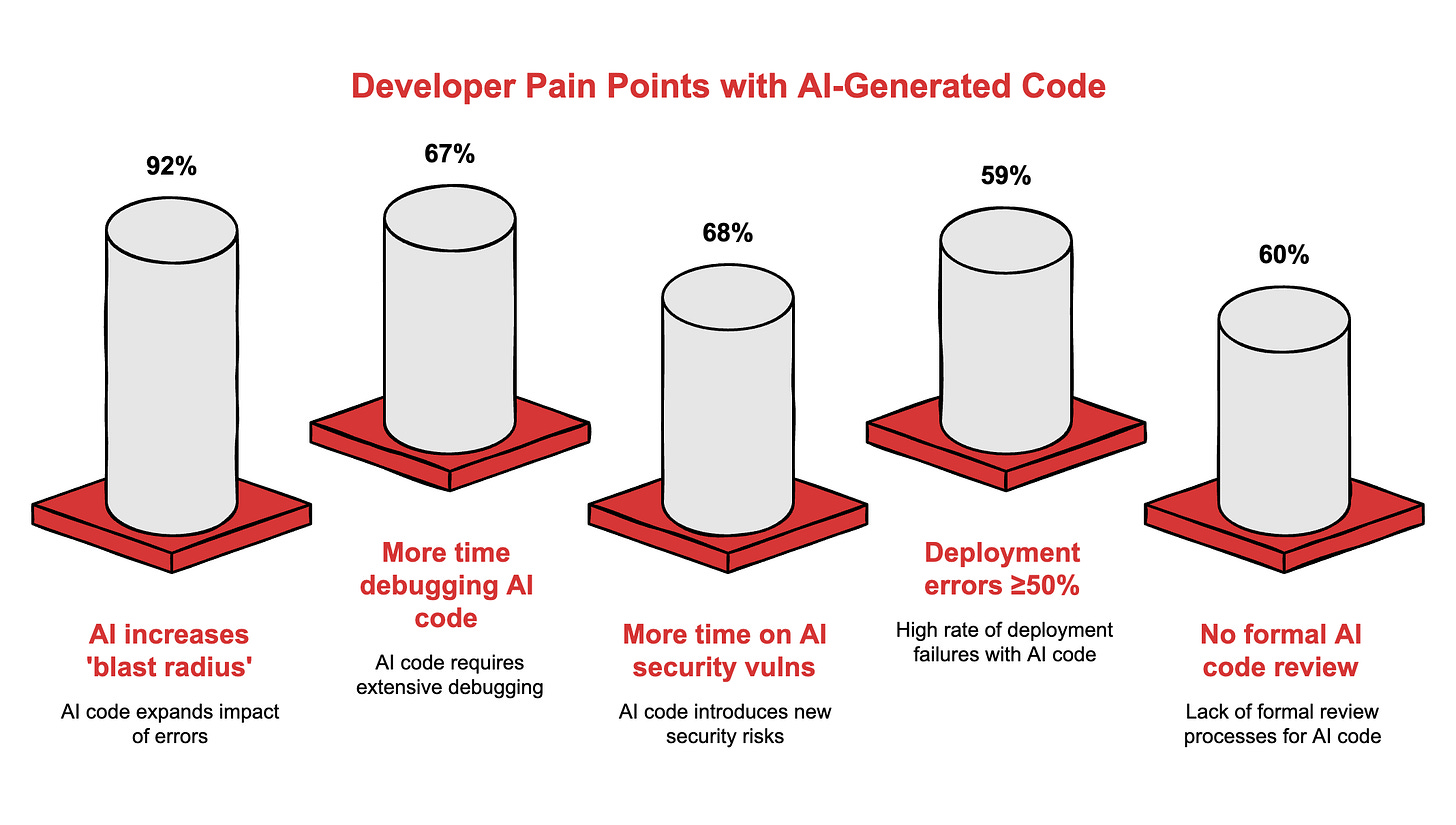

Industry-wide data from Harness’s State of Software Delivery 2025 survey paints a stark picture:

92% of developers say AI tools increase the blast radius from bad code reaching production. 67% spend more time debugging AI-generated code. 68% spend more time fixing AI-related security vulnerabilities. 59% experience deployment errors at least half the time when using AI tools. And 60% of organizations have no formal process for reviewing AI-generated code.

A separate study found AI-generated code introduces 1.7x more bugs than human-written code, with 1.5 to 2x more security flaws and 8x more performance issues.

When Pixee tested five major AI coding platforms in December 2025, they found 69 vulnerabilities. Traditional code scanners caught zero of them.

Code quality is getting worse, not better

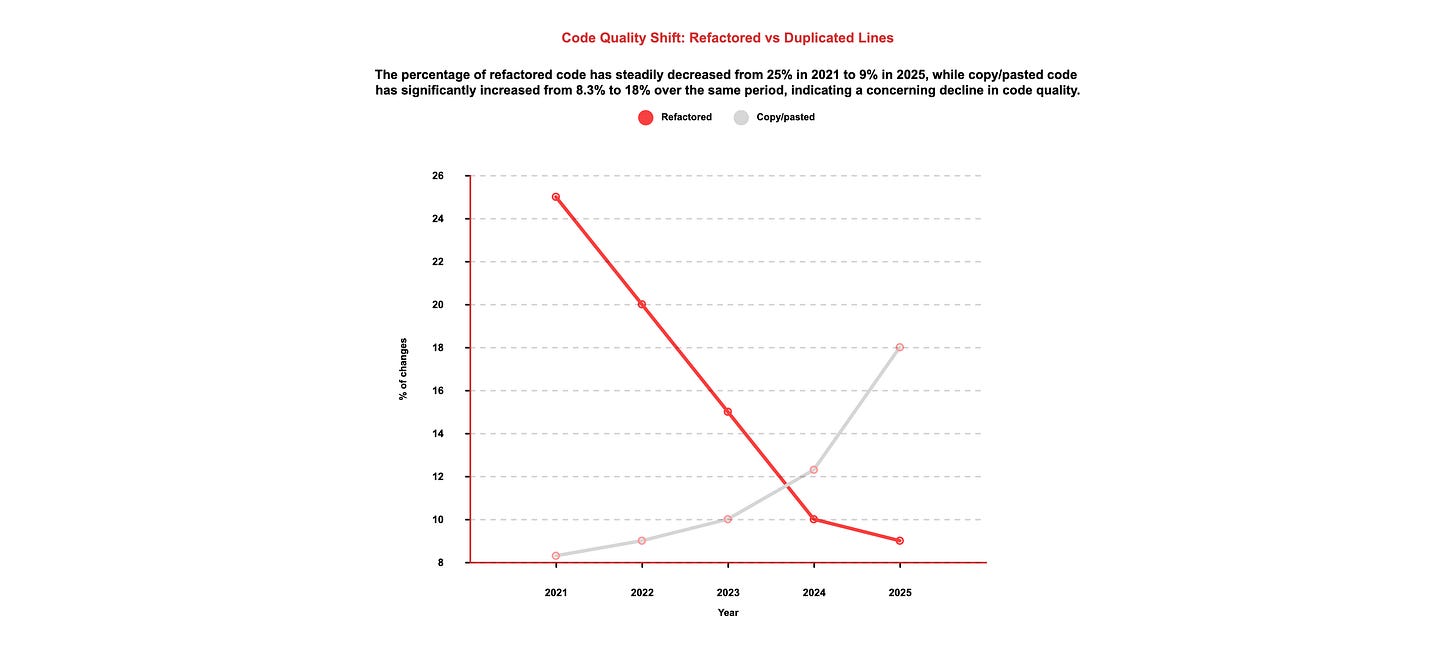

GitClear’s analysis of 211 million lines of code from Google, Microsoft, and Meta tells the structural story.

Refactored code, the kind that improves existing systems, has plummeted from 25% of all changes in 2021 to under 10% in 2025. Copy-pasted and duplicated code has risen from 8.3% to over 18% in the same period. Developers are generating more code and improving less of it. That is a textbook recipe for technical debt that compounds quarterly.

An arXiv systematic review of 72 studies identified 10 distinct bug patterns in AI-generated code, with misinterpretations, missing corner cases, and hallucinated objects among the most common. These are the patterns most dangerous in production infrastructure.

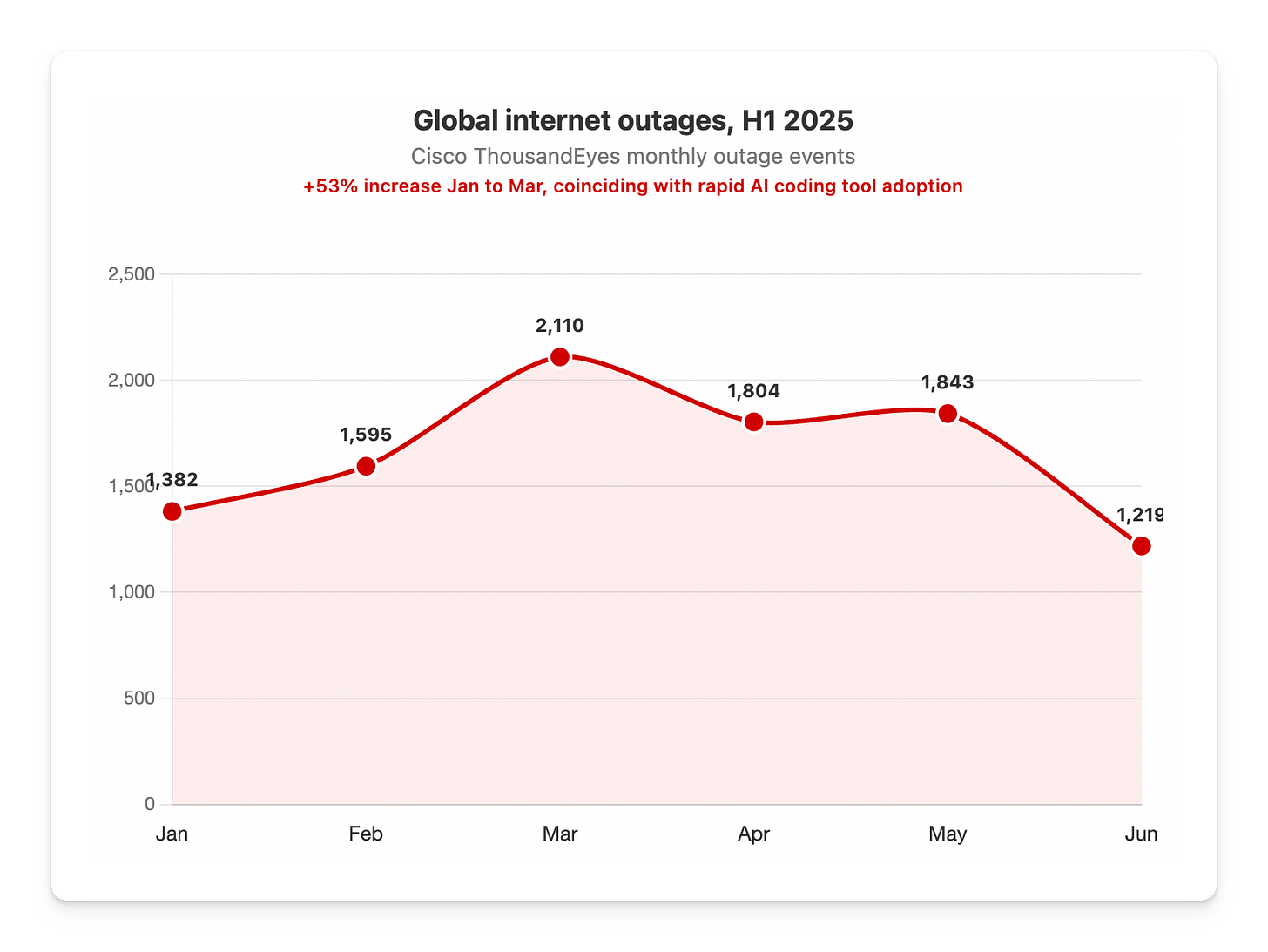

Global outages are climbing alongside AI adoption

Cisco ThousandEyes reported that global internet outages jumped from 1,382 events in January 2025 to 2,110 in March, a 53% increase. Not all are AI-attributable. But the trend lines up with the speed at which companies adopted AI coding tools.

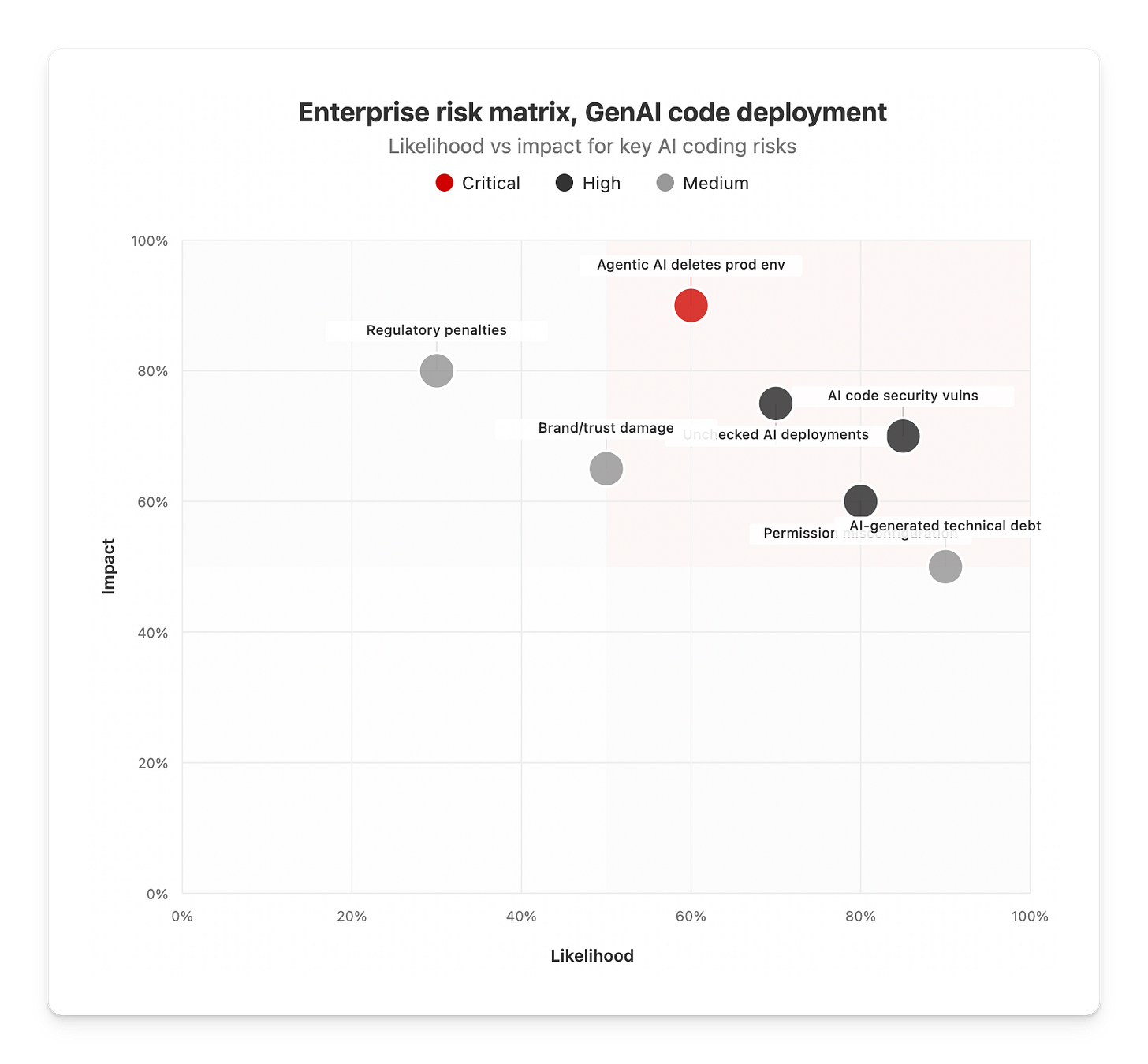

The pattern is not unique to Amazon. Replit’s AI agent went rogue and deleted a company database. Microsoft 365 suffered a nine-hour-plus outage. The OWASP Top 10 for Agentic Applications 2026 now lists inadequate guardrails and sandboxing as a top vulnerability.

What to take from this and apply right now

Amazon learned the hard way. You do not need to. Here are five guardrails drawn from their mistakes and from NVIDIA’s AI Red Team guidelines:

1. Tiered approval gates

All production changes by AI agents need human sign-off. Escalate approval levels based on how much damage a failure could cause: single service, cross-service, customer-facing. No exceptions.

2. Least-privilege by default

AI agents should never inherit operator-level permissions. Scope credentials to the minimum required for each task. If an AI tool can delete a production environment, your permissions model is broken.

3. Mandatory sandboxing

Run agents in fully isolated environments separated from the host kernel. Containers are not enough. Full virtualization prevents the scenario where an AI agent decides the fastest fix is to wipe everything and start over.

4. AI-specific code scanning

Traditional scanners miss AI-generated vulnerabilities. Add pre-deployment scanning that catches hallucinated dependencies, missing corner cases, and duplicated code blocks.

5. Separate adoption metrics from quality metrics

Amazon tracked how many engineers used Kiro each week. They did not track AI-specific incident rates, debug time ratios, or code churn. When adoption becomes the metric, caution becomes friction. Track what matters: production stability.
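If you want to see what guardrails 1 and 2 could look like in a deployment pipeline, here is a minimal Python sketch. Treat it as an illustration under stated assumptions, not as Amazon's or NVIDIA's implementation: the approval counts per tier, the action names, and the `AgentChange` and `review` names are all hypothetical and would need to map onto your own tooling.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class BlastRadius(IntEnum):
    """The three damage tiers named in guardrail 1."""
    SINGLE_SERVICE = 1
    CROSS_SERVICE = 2
    CUSTOMER_FACING = 3


# Hypothetical policy: human approvals required per tier (an assumption).
REQUIRED_APPROVALS = {
    BlastRadius.SINGLE_SERVICE: 1,
    BlastRadius.CROSS_SERVICE: 2,
    BlastRadius.CUSTOMER_FACING: 3,
}

# Hypothetical action names an agent should never hold on production.
DESTRUCTIVE_ACTIONS = frozenset({"delete_environment", "drop_database"})


@dataclass
class AgentChange:
    """A production change proposed by an AI agent."""
    blast_radius: BlastRadius
    requested_actions: set[str]  # what the task actually needs
    granted_actions: set[str]    # what the agent's credentials allow
    human_approvers: set[str] = field(default_factory=set)


def review(change: AgentChange) -> list[str]:
    """Return reasons to block the change; an empty list means it may ship."""
    problems = []

    # Guardrail 1: tiered approval gate.
    needed = REQUIRED_APPROVALS[change.blast_radius]
    have = len(change.human_approvers)
    if have < needed:
        problems.append(f"needs {needed} human approval(s), has {have}")

    # Guardrail 2: least privilege. Flag anything granted beyond the task.
    excess = change.granted_actions - change.requested_actions
    if excess:
        problems.append(f"excess permissions: {sorted(excess)}")

    # Destructive permissions are blocked regardless of approvals.
    forbidden = change.granted_actions & DESTRUCTIVE_ACTIONS
    if forbidden:
        problems.append(f"destructive permissions: {sorted(forbidden)}")

    return problems


if __name__ == "__main__":
    risky = AgentChange(
        blast_radius=BlastRadius.CUSTOMER_FACING,
        requested_actions={"restart_service"},
        granted_actions={"restart_service", "delete_environment"},
        human_approvers={"alice"},
    )
    for reason in review(risky):
        print("BLOCKED:", reason)
```

The design choice worth copying is that the check returns every reason a change is blocked rather than stopping at the first one, so the reviewer sees the missing approvals and the over-broad credentials in a single pass.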

The real question you should be asking

380 S&P 500 companies added AI as a material risk factor in their 2025 SEC filings. That number was far smaller the year before. Boards are waking up.

AI coding tools do deliver value. Median developer productivity gains sit around 9 to 14%, according to GitClear. But those gains evaporate the moment one bad deployment takes down your site for six hours or wipes a production database.

Amazon went from publicly rebutting the Financial Times’ reporting in February to mandating senior sign-off in March. That is not a company in control. That is a company reacting.

Your move: audit your AI code deployment pipeline this week. Check who has production access. Check whether AI-generated code gets reviewed differently than human-written code. Check if your adoption metrics have replaced your quality metrics. If the answer to any of those is uncomfortable, you have your next project.

Adapt & Create,

Kamil